A while ago I was trying to track down the answer to "Why does this 512MB SODIMM just appear as 256MB?" without any success. There are plenty of theories out there, some total rubbish, some semi-rubbish, some cogently argued, but none I believed. Unfortunately, for reasons given below, this article also falls into the "My best guess" category. What prompted this article was an eBay purchase where the supplier messed up totally: instead of sending me the two 1GB SODIMMs I'd ordered, he sent me two 1GB Micron SODIMMs which had different part numbers. When I plugged them in turn into a Neoware CA19, one appeared as 1GB and one as 512MB.

Way back when, you could expand the amount of memory in your PC by buying specific integrated circuits and pushing them into the empty IC sockets on the motherboard. Alternatively you bought a memory board and stuffed it into a socket on the backplane of your system. Over time this changed towards the idea of the SIMM (Single In-line Memory Module), where the 8 or 16 ICs you needed were mounted on a small circuit board that plugged straight into the motherboard, which already had the necessary memory controller and interface circuits.

The SIMMs and the technology evolved, and PPD (Parallel Presence Detect) was introduced to help the motherboard keep track of exactly what was being plugged in. There were also timing parameters that had to be set by hand in the BIOS.

When the SDRAM PC100 standard was introduced it also included SPD - Serial Presence Detect. This was an onboard 128 byte EEPROM that included a wealth of information about the SDRAM which enabled the BIOS to automatically configure the memory controller for it without user intervention. The SPD specification has continued to evolve with each new generation of memory type. If you are interested in what is in the SPD EEPROM then a good summary is in this Wikipedia article.
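To give a feel for what the BIOS gets out of the SPD, here is a minimal Python sketch that works out a module's capacity from a handful of SPD bytes. The byte offsets (3 = row address bits, 4 = column address bits, 5 = ranks on the module, 6/7 = module data width, 17 = banks per SDRAM device) are my understanding of the SDR SDRAM SPD layout and should be checked against the JEDEC document before being relied on. The function name and the example bytes are made up for illustration and were not read from a real module.

```python
def sdr_spd_capacity_mb(spd: bytes) -> int:
    """Estimate module capacity in MB from an SDR SDRAM SPD image.

    Assumed offsets (verify against the JEDEC SPD specification):
      byte 3  low nibble: number of row address bits
      byte 4  low nibble: number of column address bits
      byte 5: number of ranks (physical banks) on the module
      bytes 6-7: module data width in bits (little endian)
      byte 17: number of banks inside each SDRAM device
    """
    row_bits = spd[3] & 0x0F
    col_bits = spd[4] & 0x0F
    ranks = spd[5]
    width_bits = spd[6] | (spd[7] << 8)
    device_banks = spd[17]

    # Addressable locations per rank, each 'width_bits' wide.
    locations = (1 << (row_bits + col_bits)) * device_banks
    total_bits = locations * width_bits * ranks
    return total_bits // (8 * 1024 * 1024)


# Hypothetical SPD contents: 13 row bits, 10 column bits,
# 2 ranks, 64-bit module, 4 banks per device.
example = bytearray(128)
example[3] = 13
example[4] = 10
example[5] = 2
example[6] = 64
example[17] = 4

print(sdr_spd_capacity_mb(bytes(example)))
```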

This is really a complex topic which I'll oversimplify.

The basic building block at the heart of the memory chip is a matrix of memory cells. They are accessed by a sequential operation of selecting a particular row and then a particular column. The row address and the column address are sent over the same address bus. Multiplexing the address in this way reduces the pin count on the IC. It also reflects the way that the matrix is organised: an entire row is read at once, and then the column address selects just one or more bits of the row to route to the output pin(s). In the olden days the memory chips were organised x1, i.e. they delivered a single bit of information on each read, so if your memory bus was 16 bits wide you would need to use 16 ICs. Over time the IC's data width has increased, with x4, x8 and x16 devices, and the external data bus has migrated to 64 bits wide.

You can obviously increase the capacity of a (SO)DIMM by putting more ICs on it. This introduces the concept of having "banks" of memory - you now also have to select which "bank" of ICs you are accessing. As chip densities have increased, as well as the data width getting wider, the chips have also gained internal banks.

For example the PC133 SDRAM unbuffered SO-DIMM standard specifies two reference designs which can be populated by various types of x16 SDRAMs:

| Raw Card Version | SO-DIMM Capacity | SO-DIMM Organization | SDRAM Density | SDRAM Organization | # of SDRAMs | # of Physical Banks | # of Banks in SDRAM | Address bits row/col/banks |
|---|---|---|---|---|---|---|---|---|
| A | 32 MB | 4M x 64 | 64 Mb | 4M x 16 | 4 | 1 | 4 | 12/8/2 |
| B | 64 MB | 8M x 64 | 64 Mb | 4M x 16 | 8 | 2 | 4 | 12/8/2 |
| A | 64 MB | 8M x 64 | 128 Mb | 8M x 16 | 4 | 1 | 4 | 12/9/2 |
| B | 128 MB | 16M x 64 | 128 Mb | 8M x 16 | 8 | 2 | 4 | 12/9/2 |
| A | 128 MB | 16M x 64 | 256 Mb | 16M x 16 | 4 | 1 | 4 | 13/9/2 |
| B | 256 MB | 32M x 64 | 256 Mb | 16M x 16 | 8 | 2 | 4 | 13/9/2 |
| A | 256 MB | 32M x 64 | 512 Mb | 32M x 16 | 4 | 1 | 4 | 13/10/2 |
| B | 512 MB | 64M x 64 | 512 Mb | 32M x 16 | 8 | 2 | 4 | 13/10/2 |

One would hope that any PC133 compatible memory controller would support these reference designs.
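The row/column/bank address bits in the PC133 table tie directly to the chip density and the SO-DIMM capacity. As a rough check, this short Python sketch does the arithmetic; the function names are mine, not anything from the JEDEC documents.

```python
def sdram_density_bits(row_bits: int, col_bits: int, bank_bits: int, width: int) -> int:
    """Bits stored in one SDRAM: addressable locations times data width."""
    return (1 << (row_bits + col_bits + bank_bits)) * width


def module_capacity_mb(row_bits: int, col_bits: int, bank_bits: int,
                       width: int, chips: int) -> int:
    """Capacity in MB of a module built from 'chips' identical SDRAMs."""
    total_bits = sdram_density_bits(row_bits, col_bits, bank_bits, width) * chips
    return total_bits // (8 * 1024 * 1024)


# First table row: 12/8/2 address bits, four x16 chips.
print(module_capacity_mb(12, 8, 2, 16, 4))
# Last table row: 13/10/2 address bits, eight x16 chips.
print(module_capacity_mb(13, 10, 2, 16, 8))
```

The first call gives 32 (MB) and the second 512 (MB), matching the first and last rows of the table.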

The various SO-DIMM specifications are downloadable from the JEDEC site, but you have to register first.

Memory access is done via the Memory Controller. This essentially takes the linear address from the CPU and translates it into the complex series of signals necessary to access a specific set of memory cells on the chips on the SODIMM. Crudely, whatever the device, the linear address breaks down into a row, column and bank. In order for it to do this properly it has to be configured by the BIOS based on the information it reads out from the SPD(s) on the various memory sticks. All the SDRAM/DDR/DDR2 etc bit does is alter the complexity of the clocking as each new standard is designed to get the selected data out of the (SO)DIMM more quickly. (cf DDR standing for Double Data Rate).
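As an illustration of that breakdown, here is a small Python sketch that splits a linear address into rank, row, bank and column fields. The bit ordering used (column in the lowest bits, then bank, then row, then rank) is purely an assumption for the example. Real memory controllers choose their own mapping and I am not claiming this is what any particular chipset does.

```python
from typing import NamedTuple


class DramAddress(NamedTuple):
    rank: int
    row: int
    bank: int
    column: int


def split_address(addr: int, row_bits: int, col_bits: int, bank_bits: int,
                  bus_bytes: int = 8) -> DramAddress:
    """Split a linear byte address into DRAM fields (hypothetical mapping)."""
    # A 64-bit bus moves 8 bytes per access, so drop the byte-within-word part.
    word = addr // bus_bytes
    column = word & ((1 << col_bits) - 1)
    word >>= col_bits
    bank = word & ((1 << bank_bits) - 1)
    word >>= bank_bits
    row = word & ((1 << row_bits) - 1)
    word >>= row_bits
    # Whatever is left over selects the rank (the "physical bank" of chips).
    return DramAddress(rank=word, row=row, bank=bank, column=column)


# 13 row bits, 10 column bits, 2 bank bits: one rank covers 256MB.
print(split_address(8 * 1024, 13, 10, 2))
print(split_address(1 << 28, 13, 10, 2))
```

With this mapping a controller that is configured with too few row, column or bank bits simply never generates the upper part of the address range, which is one way a module could end up appearing smaller than it is.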

The fundamental limitation is the memory controller. What is it capable of doing? Another question is whether the BIOS has initialised it properly. I would sort-of assume that the two are reasonably matched - if the memory controller can support X then the BIOS will set it up correctly. If it can't support X then what does the BIOS do? In some cases it sits there complaining loudly - one long endless beep!

In checking things out on the web you'll often find mention of 'high density' and 'low density' parts. I don't know if there is any formal definition of these terms, but my take is that they refer to the memory capacity of the chips used, not how many have been squeezed onto the SODIMM. As a result it's a little counter-intuitive - a SODIMM packed with ICs may well be a 'low density' part.

The high density parts are likely to be those that appear towards the end of a particular standard's lifecycle as memory technology has improved and higher capacity chips become available.

So, if we assume the manufacturers haven't limited things further, what we end up with is really quite simple:

This is where I grind to a halt. A lot of thin clients use VIA processors and chip sets. VIA do NOT publish their datasheets. They are available under NDA but I get the impression that even then they are not easy to get hold of. You really have to be in the OEM volume market to get them. I also haven't found one for the SiS Northbridge used in the Nec D380. Ho-hum.

Having just said that the datasheets are not available, the one for the CLE266 has escaped into the wild!

Some key points from it:

It does state that "x4 DRAMs supported by SDR only"

The following table shows the Memory Address Map type encoding:

| Code | Chip size | Column addresses |
|---|---|---|
| 000 | 16Mb | 8-bit, 9-bit, 10-bit |
| 001 | 64/128Mb | 8-bit |
| 010 | 64/128Mb | 9-bit |
| 011 | 64/128Mb | 10/11-bit |
| 100 | -reserved- | |
| 101 | 256Mb | 8-bit |
| 110 | 256Mb | 9-bit |
| 111 | 256Mb | 10/11-bit |

Depending on the chip size and organisation (x4,x8,x16) they have page sizes of 2k/4k/8k.

So far I haven't had a reasonable set of working/not quite working SO-DIMMs to check out a CLE266 equipped thin client.

According to VIA's website the CN700 memory controller supports DDR2 533/400 or DDR400/333/266.

That having been said, we now enter the realm of speculation as we have no datasheet.

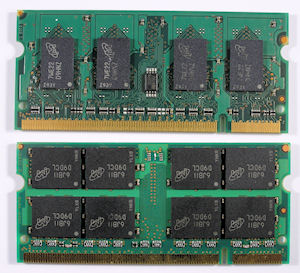

Having received two Micron 1GB SODIMMs - one organised 2Rx8 (8 chips per side) and one 2Rx16 (4 chips per side) - I tried them out in a Neoware CA19.

If we look at the respective datasheets we find the following basic information:

| Parameter | MT16HT... | MT8HT... |
|---|---|---|
| Refresh count | 8K | 8K |
| Row address | 16K A[13:0] | 8K A[12:0] |
| Device bank address | 4 BA[1:0] | 8 BA[2:0] |
| Device configuration | 512Mb (64 Meg x 8) | 1Gb (64 Meg x 16) |
| Column address | 1K A[9:0] | 1K A[9:0] |
| Module rank address | 2 S#[1:0] | 2 S#[1:0] |

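The three factors that stand out here are:

1. The device data width (x8 versus x16)
2. The number of row addresses (16K versus 8K)
3. The number of device banks (4 versus 8)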

On the face of it, (1) shouldn't matter. The SODIMM taken as a whole still delivers 64 bits of data.

I assume (2) and (3) are a consequence of (1). I would guess the significant parameter is (3), but in the absence of the CN700 datasheet it's difficult to know if that is the reason. However Google does produce a few hits of "The CN700 does not support (SO)DIMMs with 1Gbit devices."

Checking the DDR2 specification from the Jedec site you can see that once the individual chip size is 1Gb or greater the number of banks increases from 4 to 8.

So my best guess is that the CN700 will only support 4 memory banks. If you are buying a 1GB memory stick for a CN700 based device, avoid any that have only 8 chips on board. That should improve the odds of what you buy actually delivering its stated capacity.
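To see whether that guess is at least consistent with what I observed, here is a Python sketch that works out how much of each Micron module would be visible if the controller could only drive two bank address bits (4 banks). The `max_bank_bits` limit is my assumption about the CN700, not something taken from a datasheet, and the function and variable names are made up for the example. The address widths are the ones from the Micron datasheet figures quoted above.

```python
def visible_module_mb(row_bits: int, col_bits: int, bank_bits: int,
                      width: int, chips: int, max_bank_bits: int) -> int:
    """Module capacity in MB as seen by a controller limited to max_bank_bits."""
    # Banks the controller cannot address are simply never reached.
    usable_bank_bits = min(bank_bits, max_bank_bits)
    bits_per_chip = (1 << (row_bits + col_bits + usable_bank_bits)) * width
    return bits_per_chip * chips // (8 * 1024 * 1024)


# MT16HT...: sixteen 512Mb x8 devices, A[13:0] rows, A[9:0] columns, BA[1:0]
mt16ht = dict(row_bits=14, col_bits=10, bank_bits=2, width=8, chips=16)
# MT8HT...: eight 1Gb x16 devices, A[12:0] rows, A[9:0] columns, BA[2:0]
mt8ht = dict(row_bits=13, col_bits=10, bank_bits=3, width=16, chips=8)

# Hypothetical controller that only supports 4 banks per device.
print(visible_module_mb(**mt16ht, max_bank_bits=2))
print(visible_module_mb(**mt8ht, max_bank_bits=2))
```

Under that assumption the 16-chip module comes out at 1024MB and the 8-chip module at 512MB, which matches what the CA19 reported. That does not prove the bank count is the cause, only that the numbers fit.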

Although not directly applicable to any thin client that I'm aware of, I thought I'd add a rider here for those of you who come across listings for "AMD only" RAM. As usual, the majority of comments on various forums glibly dismiss this as 'marketing hype' - but it isn't. At one point AMD introduced a memory controller that supported an 11-bit column address when the then standard was 10 bits. The net result was that (SO)DIMMs made to use the extra column address bit would only work in the then-current AMD CPU based motherboards and not in Intel motherboards.

I've tracked down some words from 2010 from the OCZ website thanks to the wayback machine. The red text below is my added emphasis.

The new PC2-5400 Titanium modules were designed exclusively for the AMD AM2 platform and are custom-tailored to the extended column address range of the AM2 memory controller. With a doubled page size, access penalties are reduced to ultimately improve system performance. Used on the AM2 platform, the architecture of these modules is particularly beneficial for large CAD model processing and memory intense graphics applications such as filters in Adobe Photoshop or video processing. With 11 column address bit support by the AM2 memory controller, the number of addresses in each row or page can be as high as 2048 individual entries for a page size of 16kbit. Unlike modules based on standard 10-bit column address chips with an "8k" page size, the new Titanium AM2 Special modules take advantage of the AM2 controller's feature set and provide a single rank solution with 2GB density using 16k pages. This allows the controller to stay in page twice as long compared to standard memory architectures, thereby achieving unparalleled performance.

Along with the footnote:

**Not compatible with Intel platforms